How film production has changed over time

Published 3:45 pm Monday, March 13, 2023

Clarence Sinclair Bull // Getty Images


Since they were first invented, movies have become not just a source of entertainment but a place to discover parts of yourself, vehicles to pose philosophical questions, and tools to pursue social change. People tend to track the development of film via its creative movements, but equally interesting are the changes and technological progress made beyond what’s on screen.

Movies aren’t just the work of actors but also of crew members, production studios, distribution companies, and the industry at large. Innovations in any part of a movie production can also mean big business. The American film and television industry supports 2.4 million jobs and pays over $186 billion in wages annually. The U.S. is also the largest film entertainment market in the world, earning almost $21 billion, followed by China ($7 billion) and Japan ($3.4 billion). The film industry is as varied and segmented as the people who help create each moviegoing experience, and each country has its own independent standards of production. This article looks at the broad strokes of global trends and innovation.

From cinema’s origins with the Lumière brothers to the impact of auteurs like Walt Disney and Stanley Kubrick, and finally to the technological innovation of the 2000s, Giggster compiled 15 moments that altered the state of film production, using resources like Screen Rant, Collider, and StudioBinder.


Visual Studies Workshop // Getty Images

The invention of cinema and a voyage to the moon

Most people have likely heard the classic tale of early cinema audiences being so startled by the moving picture of a train that they panicked and ran out of the theater. That story is likely an urban legend, but the film they watched is very real. It arrived on the heels of inventions like the Kinetoscope, a peephole viewer for moving pictures developed in Thomas Edison’s lab in the early 1890s, and the Lumières’ own Cinématographe, which could both record and project films.

“L’Arrivée d’un train en gare de La Ciotat (Arrival of a Train at La Ciotat)” was directed by the Lumière brothers, a French duo widely considered to be the inventors of cinema. It captures the excitement of an art form that could accurately mirror the real world. Even at this early stage, however, the Lumières and other rising filmmakers like Frenchman Georges Méliès were experimenting with fictional stories, not just documenting the life around them.

The first narrative film from the Lumières was the story of a child’s prank, “L’Arroseur Arrosé (The Waterer Watered),” in 1895, but even more famous is Méliès’ 1902 “Le Voyage dans la Lune (A Trip to the Moon),” loosely based on Jules Verne’s “From the Earth to the Moon.” This iconic short film runs only about 14 minutes, but its fantastical sets and surprisingly advanced practical effects showed audiences the kind of innovation inherent to the film medium.

Hulton Archive // Getty Images

The height of the silent film era

The early 1900s saw the rise of a global film industry centered on producing feature-length black-and-white silent films, with Europe and Russia standing out in both the quality and number of their productions. It wasn’t until 1915 that the widely used Technicolor process was developed, and because its early forms were costly and laborious, multicolor films didn’t become common until the 1930s.

Exciting innovations in the silent film form came out of post-World War I Germany with the rise of German expressionism, a movement that used dark shadows and strange angles and often dealt with distorted realities, as seen in Robert Wiene’s “The Cabinet of Dr. Caligari” (1920) and Fritz Lang’s “Metropolis” (1927). This movement contrasts with American silent films, which were more naturalistic in style and focused instead on developing film as a universal visual language.

Some standout names from this time were Charlie Chaplin and Buster Keaton, who both used film as an ideal medium for visual comedy. Keaton took this to particular extremes, jumping between rooftops and standing beneath a real house facade as it collapsed around him in “Steamboat Bill, Jr.”

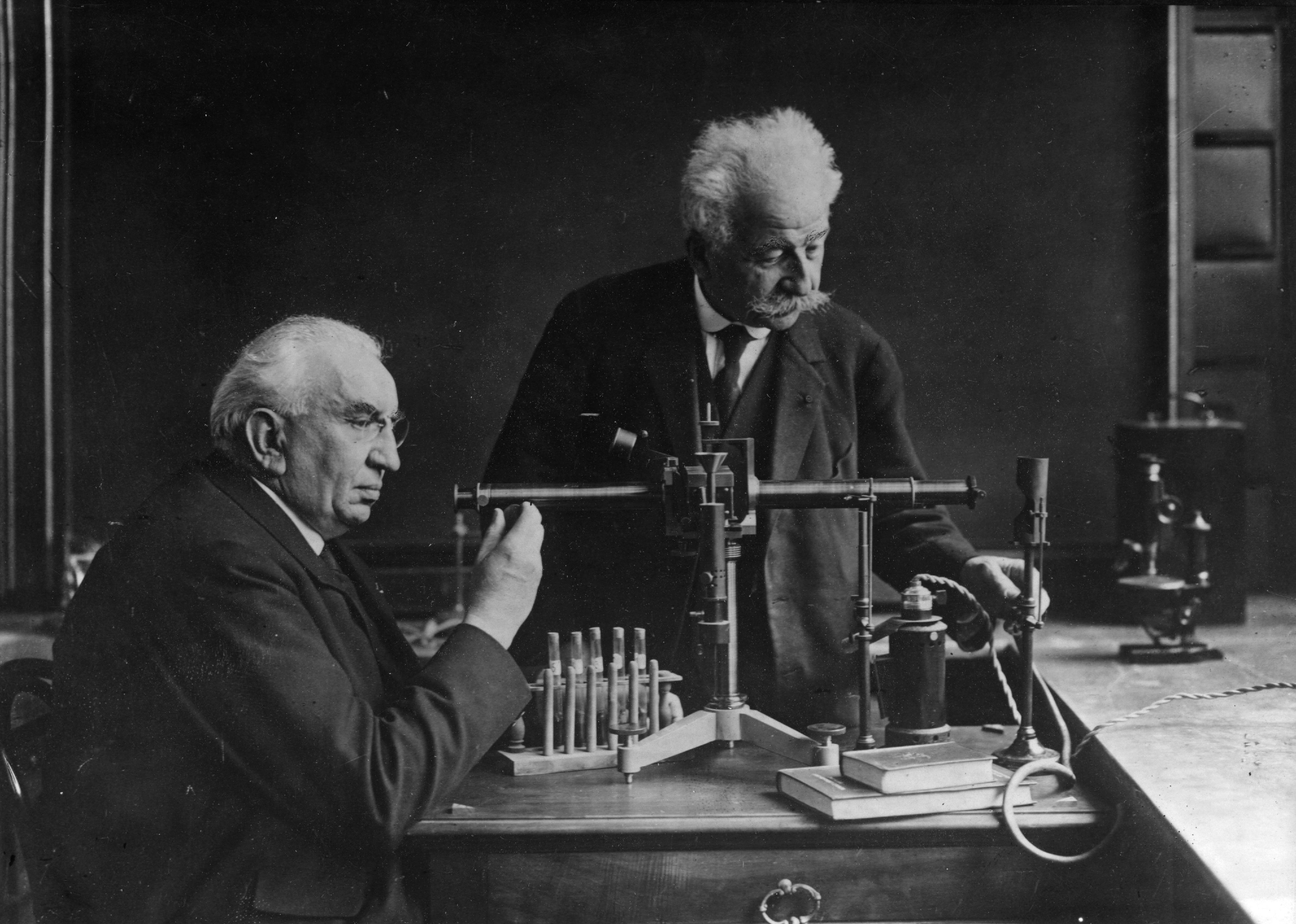

Sovfoto // Getty Images

Sergei Eisenstein, the father of montage theory

Another master of silent film, Soviet director Sergei Eisenstein is best known for his 1925 film “Battleship Potemkin,” which follows Russian sailors who mutiny against their oppressive superiors. The film is celebrated both for exploring working-class political struggles and for the brilliant way Eisenstein harnesses cinematic language, most famously in the iconic Odessa Steps sequence.

Eisenstein was a product of the early Soviet film industry, which emphasized editing as a narrative device. As such, he was one of the first filmmakers to use montage (stringing several shots together for a particular narrative purpose) instead of relying solely on a wide shot that covered everything, as was commonplace at the time. He wrote extensively about the benefits of montage editing and applied it in two distinct periods of his career: montage for mass dramas, which he used to illustrate the plight of the proletariat, and montage for individuals, which used the technique to tell intimate personal stories.

Warner Brothers // Getty Images

‘The Jazz Singer’ and the talkie

Damien Chazelle’s 2022 Hollywood epic “Babylon” tracks the shift from silent film to so-called “talkies,” films with synchronized sound that let audiences hear actors speak on screen. “Babylon” even includes a shot from the movie that changed everything: Alan Crosland’s “The Jazz Singer,” a Warner Bros. production released in 1927 using groundbreaking technology called the Vitaphone.

The Vitaphone built on phonograph technology, which Thomas Edison first invented in 1877: it recorded the film’s sound onto large discs while the camera was rolling, and the discs were then played back in sync with the projected image. “The Jazz Singer” combined synchronized speech and singing to achieve its unprecedented sound, mainly highlighting lead actor Al Jolson’s voice as he sang six different songs. This didn’t mark the end of silent films, whose production continued for several years after the film’s release, but talkies were clearly the next big thing in film history.

Bettmann // Getty Images

The Hays Code

Contemporary audiences are no strangers to the Motion Picture Association rating system that labels films G, PG, PG-13, R, and so on. Before that system was introduced in 1968, however, Hollywood films were held to a much stricter set of rules known as the “Hays Code,” named after Will H. Hays, a Presbyterian deacon and the first president of the organization that would become the Motion Picture Association of America.

Under his guidance, major film studios worked together to create a list of subjects that were either forbidden or strongly discouraged in films meant for wide release, including profanity, suggestive nudity, interracial relationships, mockery of religion, and sexual perversion. Versions of this code had existed since 1930 but were largely ignored due to a lack of repercussions, so when the code was officially enforced in 1934, it came with the creation of a Production Code Administration that had to approve every single film before release.

Those who ran afoul of Hollywood’s moral standards could be blacklisted, and their work was often lost to time. For example, Mabel Normand, who directed Charlie Chaplin in some of his earliest films and was the first person to break the fourth wall, was known as the “mother of comedy.” Yet her connection with Roscoe “Fatty” Arbuckle (an actor embroiled in a murder scandal) got her ousted from Hollywood in the 1920s, years before the code was formally enforced.

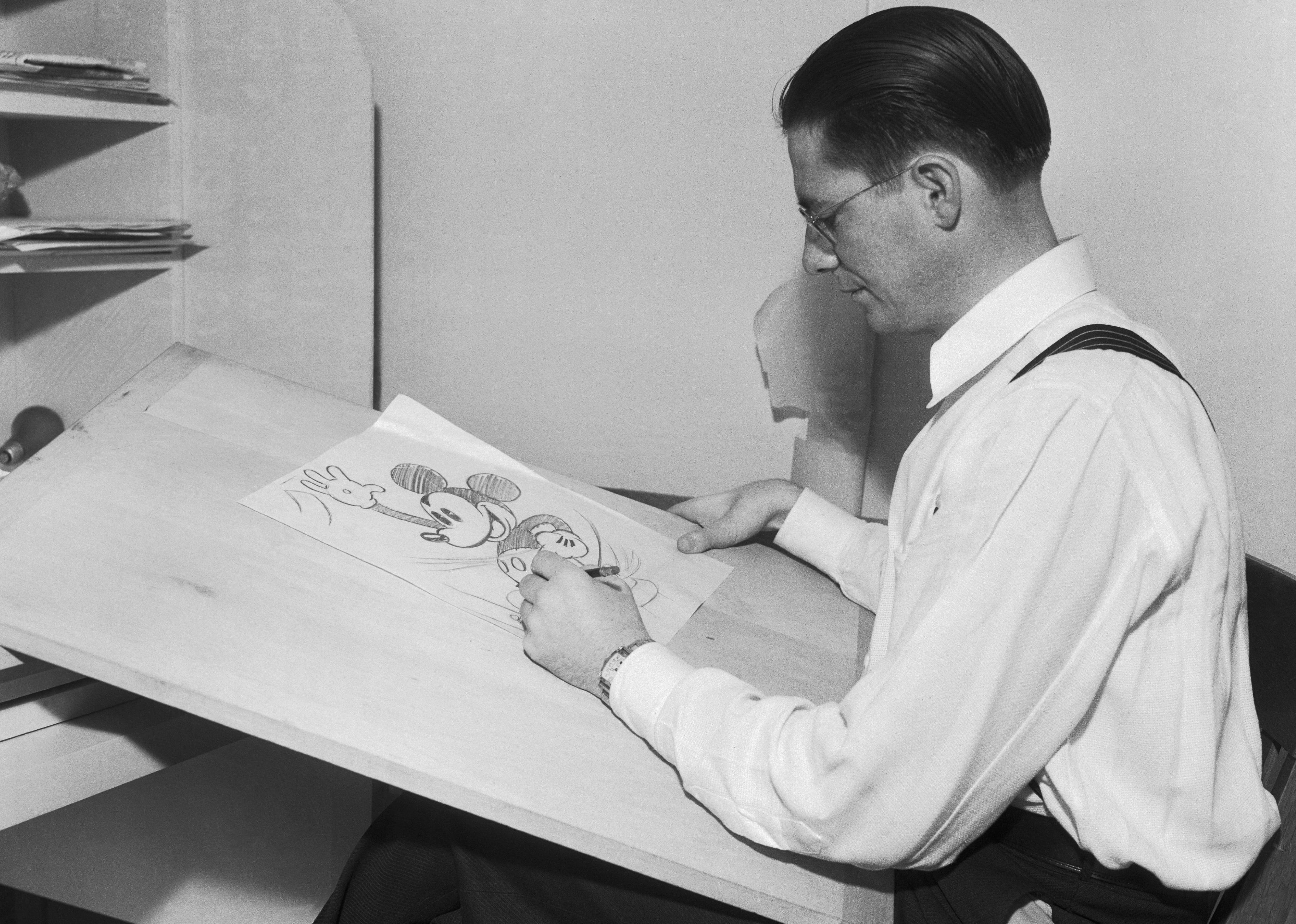

Bettmann // Getty Images

Disney’s origins and the animated feature film

1923 saw the official creation of Walt Disney Studios, originally called the Disney Brothers Cartoon Studio, where Walt and Roy Disney produced animated short films. These included several “Alice in Wonderland”-inspired shorts, “Steamboat Willie,” which introduced Mickey Mouse, and the “Silly Symphonies” musical series, all made using cel animation.

Cel animation was the gold standard for 2D animation: the moving elements of each frame are drawn and colored on a sheet of celluloid, which is layered in front of a separately drawn background. These cels are then photographed in sequence and played back, creating the illusion of movement. It is an incredibly detailed and time-consuming process that, to most people in the 1930s, made an animated feature film seem impossible.

However, Walt Disney was not like most people, and he tasked his team of animators with creating the 1937 film “Snow White and the Seven Dwarfs,” Disney’s first animated feature film. Making the film took three years and required “32 animators, 1032 assistants, 107 inbetweeners, 10 layout artists, 25 background artists, 65 special effects animators, and 158 inkers and painters and countless production staff.” Its $1.4 million cost (far over the initial $250,000 to $500,000 budget) almost bankrupted the studio. Still, the final result was worth it, as “Snow White” swept across the globe, permanently raising the bar for the film and animation industries.

Bettmann // Getty Images

‘Citizen Kane’ and the cinematic language

1941’s “Citizen Kane” is widely considered one of the best films ever made, if not the best, ranking #1 in Sight and Sound’s Greatest Films of All Time poll for 50 consecutive years (it was surpassed only in the two most recent polls, by Alfred Hitchcock’s “Vertigo” in 2012 and Chantal Akerman’s “Jeanne Dielman, 23, quai du Commerce, 1080 Bruxelles” in 2022).

Co-written, directed, and produced by Orson Welles, who also starred in it, “Citizen Kane” marked his first foray into feature filmmaking. Not that anyone could tell: Welles and his cinematographer, Gregg Toland, already demonstrated a mastery of the form. Critic Kenneth Tynan described Welles and Toland’s inventions as providing audiences with “an adult vocabulary” for cinema. The duo turned away from the artificial aesthetic of the movies of the time, striving instead for a film that mirrored real life in appearance. They accomplished this through inventive camera angles, long takes, deep focus (where subjects in both the foreground and background are in focus), and “chiaroscuro” lighting, a high-contrast style that creates bright highlights and deep shadows.

SeM/Universal Images Group // Getty Images

Italian neorealism

World War II saw various governments across the globe work in conjunction with film studios to create propaganda films in an attempt to shift public perspectives of the war. While Hollywood was one of the key players in this, Italy’s Cinecittà Studios similarly churned out propaganda until bombings by Allied Forces partially destroyed it.

When the war ended, the landscape of Italian cinema was permanently altered. Filmmakers who’d previously worked only in studio settings now found themselves without resources and with grittier stories to tell. Their response was the Italian neorealist movement, which made films with a bare-minimum crew, little equipment, and nonprofessional actors, aiming to capture real life as accurately as possible.

Stories often followed everyday working-class protagonists and explored Italy’s social and political devastation under and after Benito Mussolini. This marked a pointed departure from the Hollywood style of filmmaking and helped inspire Third Cinema, a movement in which filmmakers reject American blockbusters and European art films to create realistic political cinema where the camera is in the hands of the people.

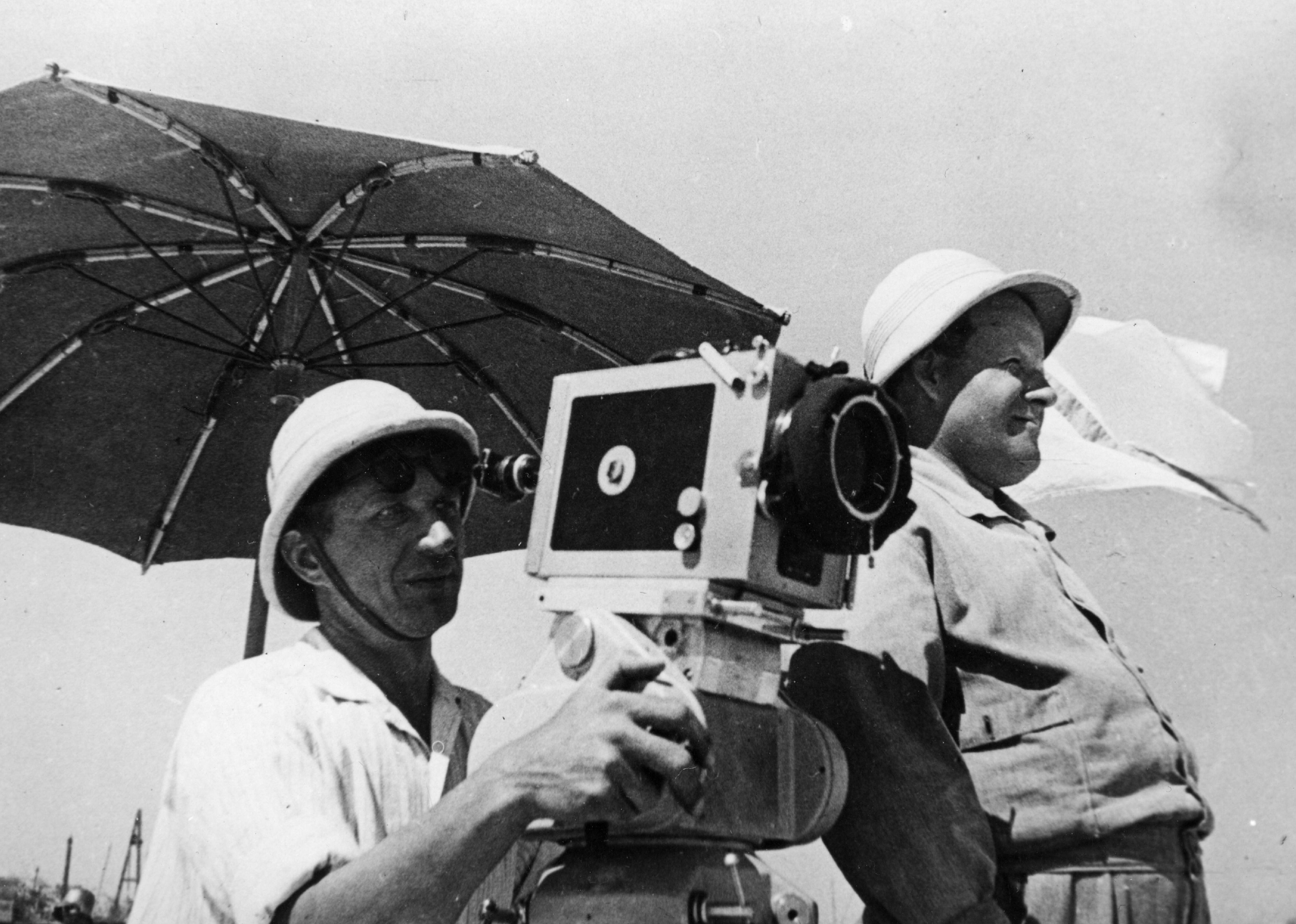

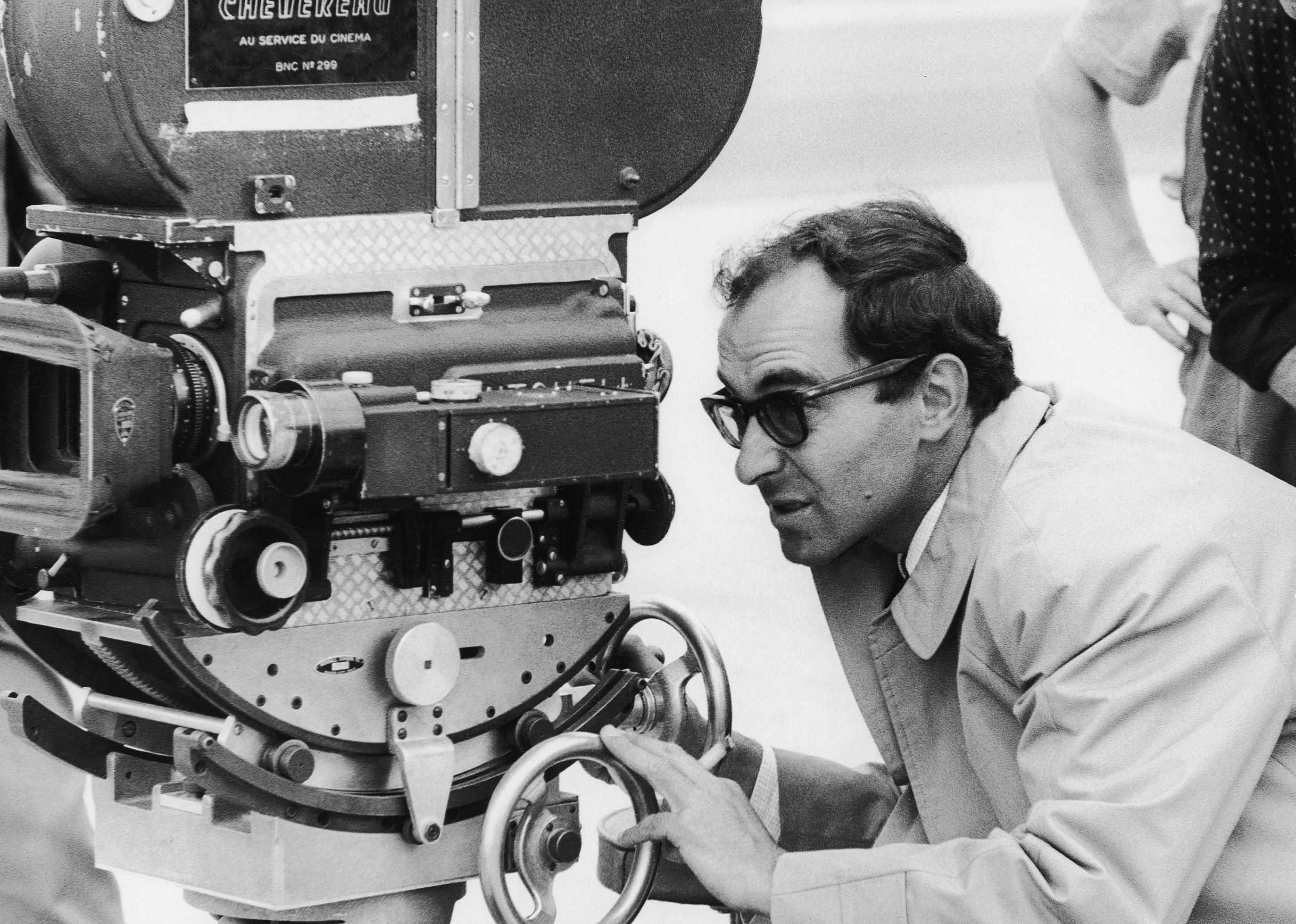

ullstein bild // Getty Images

French New Wave

Much like the 1940s Italian film industry, the cinema of post-World War II France had to make do with severely constrained budgets. Filmmakers looked to the neorealist movement before them and were inspired to use that lack of resources to redefine the cinematic form. The French New Wave of the late ’50s and ’60s used nonactors, real-world locations, and DIY lighting and camera rigs, and it has become a major source of contemporary inspiration for the kinds of films that can be made with limited means.

More interesting than its resources, however, is how the French New Wave subverted the language of cinema by rejecting classical filmmaking. Using natural light freed the camera and actors to improvise blocking, which, combined with handheld cameras, gave films a more realistic look. Much of the subversion happened in the editing, where snappy jump cuts and pans, along with voiceovers and fourth-wall breaks, defied every cinematic “rule” of the time. Finally, French New Wave films moved away from neorealism’s overtly political themes to explore existentialism and the ironies inherent to human existence.

Universal History Archive // Getty Images

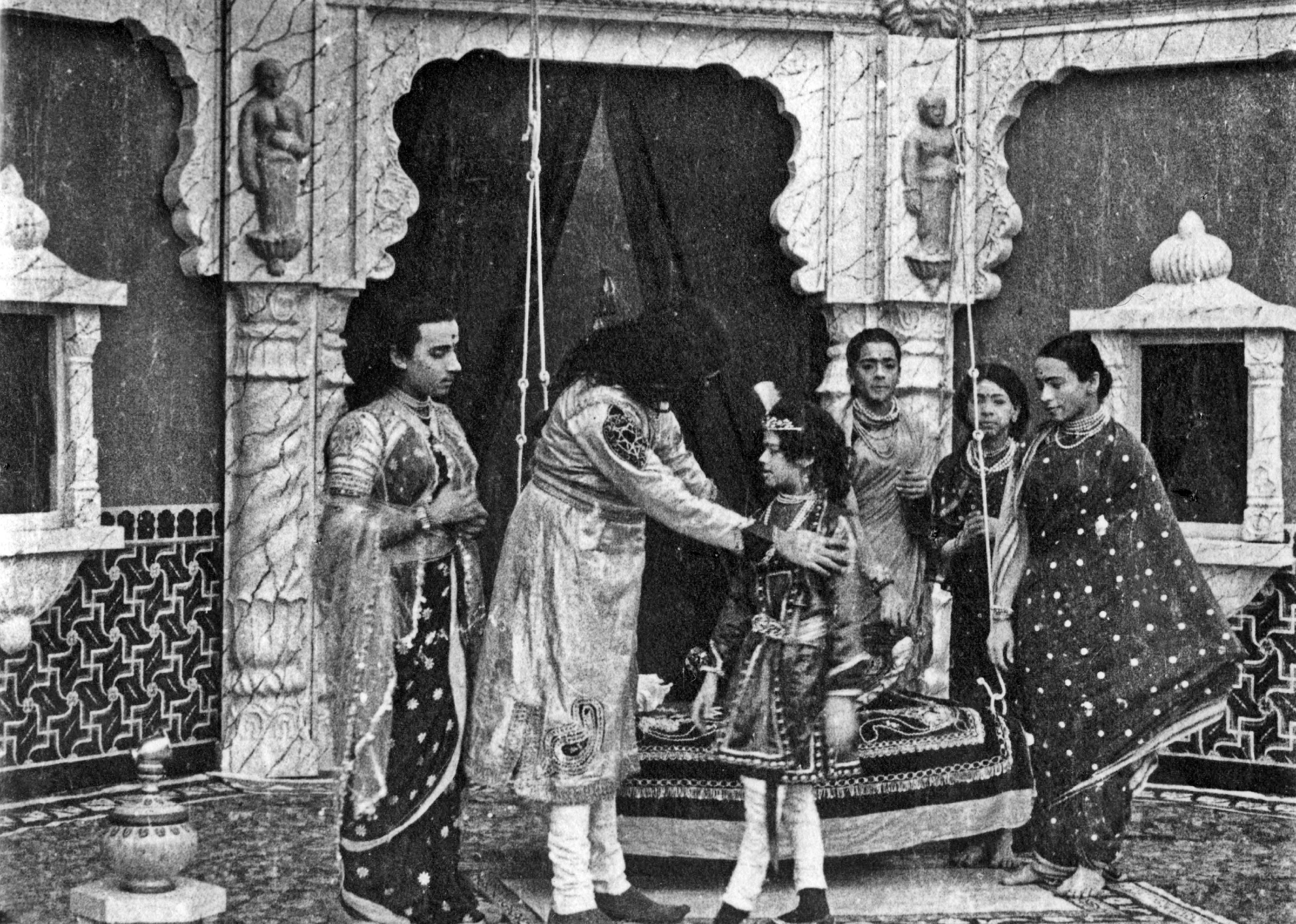

The Bollywood film industry

The name Bollywood immediately conjures images of vibrant colors, extravagant dances, and high-stakes melodrama; however, Bollywood cinema cannot be easily reduced to one stereotype. The name is a mashup of Hollywood and Bombay, the former name of Mumbai, and is a broad moniker for the Hindi-language film industry based there. This isn’t just any industry: Bollywood, together with India’s regional film industries, makes India the biggest film industry in the world by film output.

Bollywood cinema began with pioneering filmmaker Dadasaheb Phalke, who made India’s first full-length feature film, “Raja Harishchandra,” in 1913, but it progressed slightly more slowly than Hollywood: its first talkie, Ardeshir Irani’s “Alam Ara,” didn’t come out until 1931. Bollywood continued to evolve over the next 40 years, hitting its stride during Indian cinema’s golden age from the 1940s to the 1960s, which explored sociopolitical themes through large-scale productions. The Bollywood film of today emerged around the 1970s, establishing common tropes like the “masala” film, which fuses melodrama, musicals, and rom-coms, and action films exploring India’s organized crime. These films are more accessible than ever, thanks to streaming.

Keith Hamshere // Getty Images

Stanley Kubrick and science fiction

Famed director Stanley Kubrick made only 13 films over the course of his 46-year career. Still, each film tackled a wildly different story in a different genre while consistently pushing the limits of cinema. Cinematic heavyweights like Martin Scorsese, Steven Spielberg, Christopher Nolan, and James Cameron, to name just a few, have all been vocal about their inspiration from and appreciation for Kubrick’s films.

Within the film community, there is much debate over which of Kubrick’s films is his best, but 1968’s “2001: A Space Odyssey” is arguably the most impactful. Before its release, science fiction was an established genre but not a critically respected one, as most films were campy, played with the same few tropes, and lacked deeper meaning. Kubrick’s vision of science fiction, however, was a profound one, full of existentialism, questions of human nature, and an exploration of time.

A primary reason for this tonal shift is that, despite the film’s high-concept futurism, Kubrick chose to direct it as realistically as possible, which involved bringing in real-life scientists and advisors from space agencies. Kubrick’s production was so accurate that, according to The New Yorker, a high-ranking NASA executive called Kubrick’s studio “NASA East.” NASA even has a page on its website detailing which elements of space travel “2001: A Space Odyssey” accurately portrayed.

Kevin Winter // Getty Images

James Cameron and the ‘Avatar’ effect

After 13 years, the “Avatar” sequel, “Avatar: The Way of the Water,” was released in 2022 to major financial success. But even with its excellent box office numbers, it still hasn’t matched the box office haul of James Cameron’s 2009 original. That film has grossed more than $2.8 billion worldwide and has been the highest-grossing film for nearly all of the time since its release (it was only temporarily surpassed by “Avengers: Endgame”), but that’s hardly its most impactful trait.

“Avatar” revolutionized the 2000s film industry and set the stage for the massive CGI blockbusters that have become commonplace in its aftermath. Cameron decided to shoot on digital cameras at a time when film was still the norm, and only around 12% of American theaters were equipped to screen digital movies in 2008. It became the first film shot entirely on digital cameras to win the Best Cinematography Oscar.

“Avatar” also revitalized the idea of a 3D film. Cameron and equipment expert Vincent Pace created an advanced stereoscopic filming rig (two lenses recording two slightly offset images, mimicking human binocular vision) and a new motion capture technique that tracks actors’ facial muscles. Finally, to make “Avatar” believable, Cameron’s team had to create a realistic-looking alien world for the characters to inhabit, which entailed developing advanced lighting systems as well as texturing and painting software.

Sunset Boulevard // Getty Images

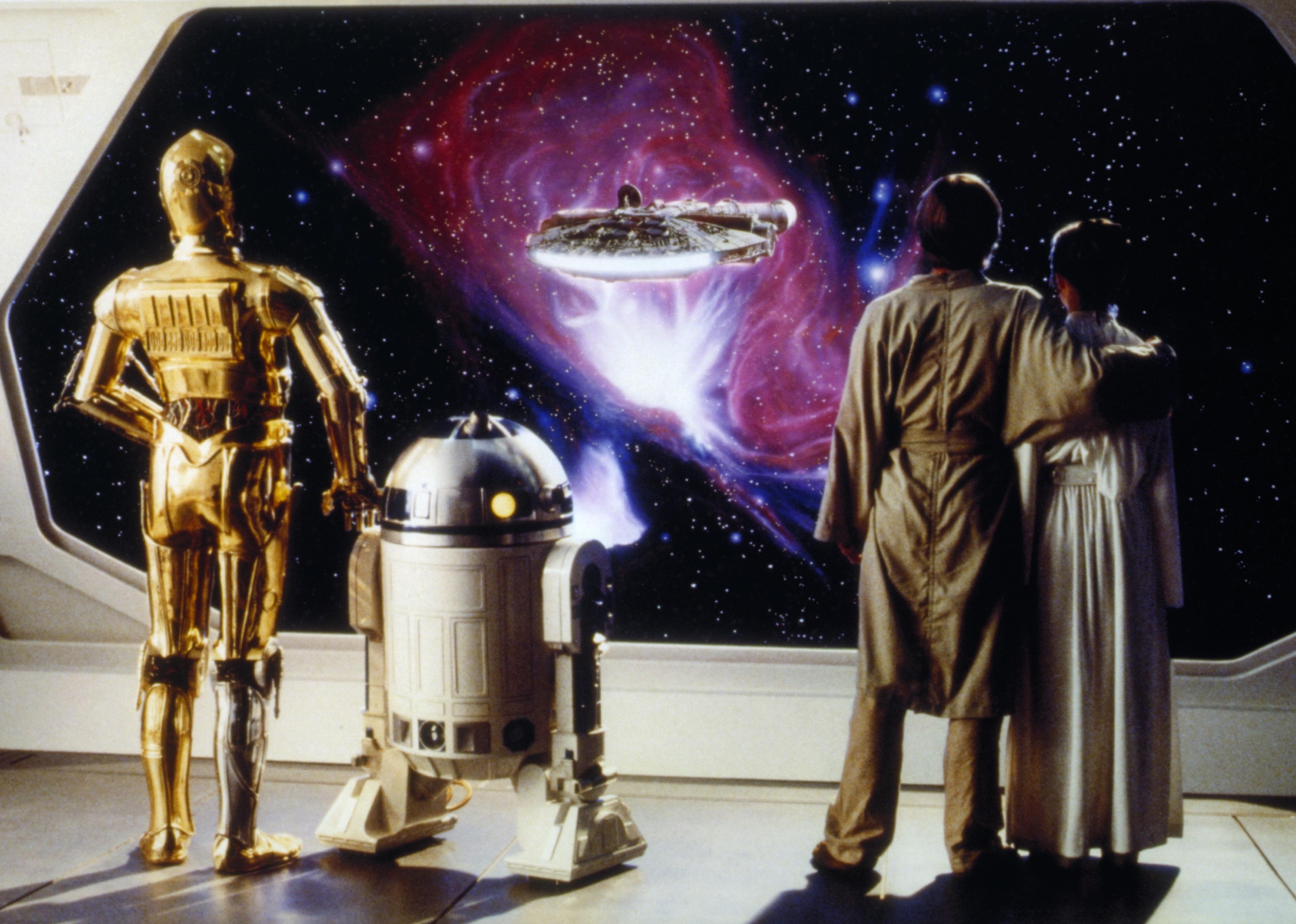

The popularization of the green screen

Green screens are an accessible modern technology used by filmmakers and YouTubers and even built into services like Zoom. Its underlying idea has also existed almost as long as film itself. Its earliest precursors trace back to hypnotist-turned-filmmaker George Albert Smith, whose technique was used in 1903’s “The Great Train Robbery” and later advanced by cinematographer Frank Williams, whose traveling matte process was used for 1933’s “The Invisible Man.”

The 1970s saw many TV stations adopt green screen technology for live broadcasts, but it wasn’t until the ’80s that the technology became the high-powered VFX tool seen today. The classic sci-fi action film “Star Wars: Episode V – The Empire Strikes Back” spearheaded this development, thanks to visual effects artist Richard Edlund, who created a printer with incredibly high-quality optics that worked with blue screen (then the standard, before green screens took over in the digital era). 1988’s “Who Framed Roger Rabbit” pushed the technique further still, seamlessly blending live-action actors and backgrounds with animated characters and paving the way for shows like “Game of Thrones” to create realistic dragons and monsters.

Gareth Cattermole // Getty Images

Marvel motion capture

In the time since the first official Marvel Cinematic Universe film, 2008’s “Iron Man,” the MCU has seemingly overtaken the film industry in both popularity and output, with more than 30 films released as of February 2023. Marvel Studios was the first to create a so-called “cinematic universe,” in which different films following different characters all take place in the same universe and sometimes cross over. It has inspired other studios and franchises to follow in its footsteps, like the DC Extended Universe and even Star Wars, with its newer spinoff films and series.

MCU movies normalized the use of extensive CGI in almost every scene, replacing practical effects and special effects makeup with green screens and motion capture. This has become Hollywood’s new standard for production: It’s easier and cheaper, but only because the visual effects artists who work on these films are underpaid and overworked. In the process, Disney-owned Marvel Studios has become such a wealthy and powerful entity that it harms independent filmmakers, whose original ideas are no longer considered viable. When independent films do make it into theaters, their runs are significantly shortened to keep MCU films playing longer.

EVGENIY SOFRONEYEV // Getty Images

ILM’s StageCraft and the rise of virtual production

The Star Wars franchise has long pushed technological advancements in film, thanks to George Lucas and special effects master John Dykstra, who led Industrial Light & Magic (ILM), the studio that brought the films’ otherworldly characters and environments to life. After more than 40 years in the business, the company is still pushing the boundaries of modern technology with its newest creation, StageCraft, which it used for “The Mandalorian.”

“The Mandalorian,” Disney’s first live-action Star Wars TV show, was a critical success when it premiered in 2019. What many viewers didn’t realize at the time was how technically impressive the show was. A traditional green screen would have posed a very specific problem for “The Mandalorian,” because the protagonist is always seen in a full-body metal suit: it would have been incredibly difficult to mask out all of the green reflections and add in reflections of each scene’s landscape.

Enter StageCraft, a massive curved wall of LED screens displaying 3D environments that form a moving, interactive backdrop for scenes to play out against. Unlike traditional backdrops, StageCraft’s screens are linked to a system that tracks the camera’s position. When the camera moves, the perspective of the images on the screens shifts to match, as if the crew were filming in a real location. ILM has since rolled out permanent and temporary virtual production stages, the latest located near its facility in Vancouver, British Columbia.

Meanwhile, more and more companies are looking to get in on the action, with virtual production being explored at studios worldwide, including in the U.K. and Canada. Despite these advances, the technology is still very young and expensive, and the industry is under pressure to find more affordable solutions so studios can stay afloat and film lovers can continue to get their fix of fantastical worlds and action-packed scenes.

This story originally appeared on Giggster and was produced and distributed in partnership with Stacker Studio.